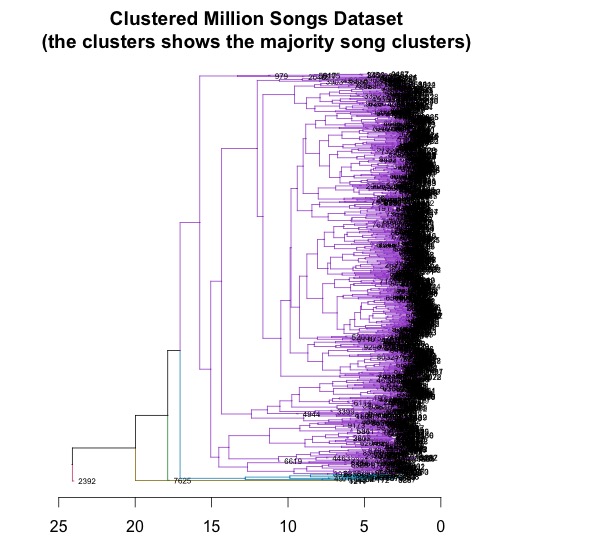

Million song dataset

From 'Creating Music by Listening' by Tristan Jehan

For this first experiment in processing the Million Song Dataset (MSD) I want to do something fairly simple and yet still interesting. One easy calculation is to determine each song’s density, where the density is defined as the average number of notes or atomic sounds (called segments) per second in a song. To calculate the density we just divide the number of segments in a song by the song’s duration. The set of segments for a track is already calculated in the MSD. An onset detector is used to identify atomic units of sound such as individual notes, chords, drum sounds, etc. Each segment represents a rich, complex, and usually short polyphonic sound. In the above graph the audio signal (in blue) is divided into about 18 segments (marked by the red lines). We should expect that high density songs will have lots of activity (as an Emperor once said, “too many notes”), while low density songs won’t have very much going on. For this experiment I’ll calculate the density of all 1 million songs and find the most dense and the least dense songs.

A traditional approach to processing a set of tracks would be to iterate through each track, process the track, and report the result. This approach, although simple, will not scale very well as the number of tracks or the complexity of the per-track calculation increases. Luckily, a number of scalable programming models have emerged in the last decade to make tackling this type of problem more tractable. MapReduce is a programming model developed by researchers at Google for processing and generating large data sets. With MapReduce you specify a map function that processes a key/value pair to generate a set of intermediate key/value pairs, and a reduce function that merges all intermediate values associated with the same intermediate key. There are a number of implementations of MapReduce, including the popular open source Hadoop and Amazon’s Elastic MapReduce.

There’s a nifty MapReduce Python library developed by the folks at Yelp called mrjob. With mrjob you can write a MapReduce task in Python and run it as a standalone app while you test and debug it. When your mrjob is ready, you can then launch it on a Hadoop cluster (if you have one), or run the job on tens or even hundreds of CPUs using Amazon’s Elastic MapReduce. Writing an mrjob MapReduce task couldn’t be easier. Here’s the classic word counter example written with mrjob:

from mrjob.job import MRJob

The input is presented to the mapper function, one line at a time. The mapper breaks the line into a set of words and emits a word count of 1 for each word that it finds.
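To make the map and reduce roles in the word counter concrete, here is a minimal sketch in plain Python. It does not use mrjob and is not the listing from the post: the names `mapper`, `reducer`, and `run_word_count` are hypothetical, and the grouping step in the middle only stands in, within a single process, for what a MapReduce framework would do across machines.

```python
from collections import defaultdict


def mapper(line):
    """Break one input line into words and emit (word, 1) for each word."""
    for word in line.split():
        yield word, 1


def reducer(word, counts):
    """Merge all the counts that were emitted for the same word."""
    return word, sum(counts)


def run_word_count(lines):
    """Simulate map, group-by-key, and reduce in one process (illustrative only)."""
    grouped = defaultdict(list)
    for line in lines:  # the mapper sees the input one line at a time
        for word, count in mapper(line):
            grouped[word].append(count)
    return dict(reducer(word, counts) for word, counts in grouped.items())


if __name__ == "__main__":
    print(run_word_count(["too many notes", "too many songs"]))
```

Splitting on whitespace is an assumption made for brevity; a real word counter would probably also normalize case and strip punctuation.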
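The density calculation itself, segments divided by duration, is small enough to sketch directly. The snippet below is only an illustration under stated assumptions: the function names are hypothetical, the track tuples are made-up values rather than MSD data, and it uses the simple iterate-over-every-track approach rather than MapReduce.

```python
def density(segment_count, duration_seconds):
    """Average number of segments per second for one track."""
    if duration_seconds <= 0:
        raise ValueError("duration must be positive")
    return segment_count / duration_seconds


def most_and_least_dense(tracks):
    """Return the (title, density) pairs with the highest and lowest density.

    `tracks` is an iterable of (title, segment_count, duration_seconds) tuples.
    """
    scored = [(title, density(n, d)) for title, n, d in tracks]
    most = max(scored, key=lambda pair: pair[1])
    least = min(scored, key=lambda pair: pair[1])
    return most, least


if __name__ == "__main__":
    # Made-up example values, not taken from the Million Song Dataset.
    sample = [
        ("busy track", 1200, 200.0),
        ("sparse track", 60, 240.0),
        ("middling track", 450, 180.0),
    ]
    print(most_and_least_dense(sample))
```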